A surprising amount of useful software is trapped inside binaries nobody can comfortably maintain anymore.

The interesting part of reverse engineering SimCity was not nostalgia. It was learning how quickly a terminal-first workflow can pull stranded capability forward into a modern system. SimCity is just a friendly example. The same pattern applies to orphaned business software, abandoned device utilities, old scientific tools, and firmware-adjacent host software still carrying real operational knowledge.

A packed 1989 DOS executable still invites the wrong kind of ambition. One can spend days pulling pseudo-C out of a 16-bit binary and still be no closer to knowing what matters for a port. The problem is not only technical. It is epistemic. The decompiler can produce a lot of shape before it produces a dependable boundary. What I needed for a macOS rewrite in Rust was narrower and more useful: recover enough truth from SIMCITY.EXE version 1.02 to load a real city, open a native window, and let the prototype tell me which parts of my reverse-engineering story were still fiction.

For stranded software, the fastest way to understand the binary is often to build a living prototype that exposes both what the old system still knows and what your decompiler story still gets wrong.

A decompiler dump was the wrong first milestone

The first lesson was severe and useful: the visible entry point was not the game.

SIMCITY.EXE turned out to be packed and self-relocating. The tiny initial stub existed to move the real program into place. If I had treated that code as the main event and kept translating forward, I would have spent time porting a packer instead of understanding SimCity. The actual first win was identifying the startup stub, following the relocator, and finding the unpacked runtime entry. Only then did the executable stop looking like one undifferentiated block.
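As a rough illustration of where "the visible entry point" comes from, the sketch below reads the standard 16-bit DOS MZ header and computes the file offset of the code the loader jumps to first. For a packed executable that offset is the stub, not the program. The struct and function names are hypothetical, and nothing here is specific to SIMCITY.EXE or to whichever packer it uses.

```rust
/// Header fields needed to locate the first instruction DOS will execute.
#[derive(Debug, PartialEq)]
pub struct MzEntry {
    pub header_bytes: usize,      // header size in bytes (paragraphs * 16)
    pub reloc_count: u16,         // number of relocation table entries
    pub cs: u16,                  // initial CS, relative to the load segment
    pub ip: u16,                  // initial IP
    pub entry_file_offset: usize, // where the entry code sits in the file
}

/// Reads a little-endian u16, returning None if the buffer is too short.
fn u16_le(bytes: &[u8], offset: usize) -> Option<u16> {
    let pair = bytes.get(offset..offset + 2)?;
    Some(u16::from_le_bytes([pair[0], pair[1]]))
}

/// Parses the MZ header and returns the entry location, or None if the
/// buffer is not an MZ executable or the entry falls outside the file.
pub fn locate_entry(exe: &[u8]) -> Option<MzEntry> {
    if exe.get(0..2)? != b"MZ" {
        return None;
    }
    let reloc_count = u16_le(exe, 0x06)?;
    let header_bytes = u16_le(exe, 0x08)? as usize * 16;
    let ip = u16_le(exe, 0x14)?;
    let cs = u16_le(exe, 0x16)?;

    // Real-mode addresses are 20 bits wide, so segment arithmetic wraps.
    let entry_in_image = (cs as usize * 16 + ip as usize) & 0xF_FFFF;
    let entry_file_offset = header_bytes + entry_in_image;
    if entry_file_offset >= exe.len() {
        return None;
    }
    Some(MzEntry { header_bytes, reloc_count, cs, ip, entry_file_offset })
}
```

An entry offset that lands in a small block of code near the end of the image is a common hint that a stub runs first, though confirming it still means reading the stub.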

That changed the milestone. I no longer wanted a heroic global summary of the binary. I wanted stable boundaries that a modern program could exploit: startup, config, save files, graphics dispatch, viewport movement, and anything else that could make a Rust port do something real before the whole game was "understood."

The toolchain that actually moved the work

The stack that worked was pragmatic, not romantic.

I initially evaluated a Binary Ninja agent bridge, but the free desktop edition could not expose the plugin/API surface the bridge needed. That ended the experiment immediately. The important lesson was not about one vendor versus another. It was that the best reverse-engineering stack for an AI agent is not the one with the prettiest pseudo-C. It is the one that exposes a practical automation surface.

The pivot to Ghidra worked because it fit the way I wanted to operate. Homebrew made installation simple on macOS. Ghidra supported headless workflows. pyghidra-mcp let a terminal coding agent ask narrow questions about symbols, strings, references, memory, and decompilation without turning every step into GUI driving. That was the difference between "using Ghidra" and actually building a repeatable workflow.

I kept one more small weapon in reserve: a custom Ghidra helper, TraceGraphicsStrings.java, that scanned for strings like monodat.pgf and scen.ppf, traced references, and decompiled the first wave of caller functions. That is exactly the kind of glue work AI tooling benefits from. It compresses the distance between "I saw a graphics filename" and "show me the functions that care about it."
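The first half of that helper's job, finding where resource filenames live, can be sketched outside Ghidra. The Rust below is a stand-in for illustration, not a translation of TraceGraphicsStrings.java: it only reports byte offsets of case-insensitive filename matches in a memory image. Tracing references and decompiling callers still needs a real disassembler. The function names are hypothetical, and it assumes the image has already been unpacked, since a packed file may not contain the strings in readable form.

```rust
/// Returns every offset at which `needle` occurs in `image`, ignoring ASCII case.
pub fn find_ascii_ci(image: &[u8], needle: &str) -> Vec<usize> {
    let needle = needle.as_bytes();
    if needle.is_empty() || needle.len() > image.len() {
        return Vec::new();
    }
    image
        .windows(needle.len())
        .enumerate()
        .filter(|(_, window)| window.eq_ignore_ascii_case(needle))
        .map(|(offset, _)| offset)
        .collect()
}

/// Scans for several resource names and returns (name, offset) pairs,
/// grouped by name in the order the names were given.
pub fn scan_resource_names<'a>(image: &[u8], names: &[&'a str]) -> Vec<(&'a str, usize)> {
    let mut hits = Vec::new();
    for &name in names {
        for offset in find_ascii_ci(image, name) {
            hits.push((name, offset));
        }
    }
    hits
}
```

The offsets are only a starting point: the useful question is which functions reference those addresses, which is what the Ghidra side answers.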

On the implementation side, I split the Rust port into separate app, assets, core, renderer, and platform crates. That mattered more than it sounds. Reverse-engineering questions stayed isolated from rendering and input work, which meant the port could keep gaining useful surface area even when the binary was only partially understood.

Stable boundaries beat global understanding

Once the toolchain was live, the next mistake would have been asking it for a grand unified explanation of SimCity. Old binaries do not reward that kind of request. Narrow, iterative questions worked better.

The highest-leverage boundaries were concrete: the unpacked runtime entry, command-line and config handling, graphics backend dispatch, camera movement, SIMCITY.CFG, .CTY save files, and the unresolved PGF / PPF resource containers. Those boundaries could each feed a modern port even if the whole program remained only partially mapped.

File formats paid first. The .CTY parser turned out to be more valuable than another sweep of speculative pseudo-C. The DOS files are 27,248 bytes long, with a 128-byte header, a 120x100 map payload of big-endian 16-bit words (24,000 bytes), and an on-disk column-major layout that has to be transposed into row-major memory. That is not glamorous knowledge, but it is immediately useful. Once the parser worked, I was not staring at a binary anymore. I was loading Detroit.
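The transposition step is small enough to sketch. A 120x100 grid of 16-bit words is 24,000 bytes, and together with the 128-byte header that accounts for 24,128 of the 27,248 bytes. The text above does not say what the remaining 3,120 bytes hold or where the map begins, so this sketch deliberately takes only the 24,000-byte payload slice and leaves locating it to the caller. The names are hypothetical, not taken from the port.

```rust
pub const MAP_WIDTH: usize = 120;
pub const MAP_HEIGHT: usize = 100;
pub const MAP_BYTES: usize = MAP_WIDTH * MAP_HEIGHT * 2;

#[derive(Debug, PartialEq)]
pub enum MapError {
    WrongLength { expected: usize, actual: usize },
}

/// Decodes a column-major payload of big-endian u16 words into a
/// row-major tile vector, so that tile (x, y) lives at `y * MAP_WIDTH + x`.
pub fn decode_map(payload: &[u8]) -> Result<Vec<u16>, MapError> {
    if payload.len() != MAP_BYTES {
        return Err(MapError::WrongLength {
            expected: MAP_BYTES,
            actual: payload.len(),
        });
    }
    let mut tiles = vec![0u16; MAP_WIDTH * MAP_HEIGHT];
    for x in 0..MAP_WIDTH {
        for y in 0..MAP_HEIGHT {
            // On disk, each column of 100 words is stored contiguously.
            let src = (x * MAP_HEIGHT + y) * 2;
            let word = u16::from_be_bytes([payload[src], payload[src + 1]]);
            tiles[y * MAP_WIDTH + x] = word;
        }
    }
    Ok(tiles)
}
```

Getting the two index expressions backwards produces a map that still decodes but looks scrambled, which is exactly the kind of mistake that is easier to see in a rendered window than in a hex dump.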

A recovered file format is often worth more than another thousand lines of speculative decompilation.

The port became the debugger

This is where the workflow stopped feeling like ordinary reverse engineering and started becoming fun.

As soon as the Rust app could scan an original distribution directory, parse a real city, and paint the map into a framebuffer, every new feature started acting as a diagnostic surface. A native window mattered. A headless .ppm frame dump mattered. A Micropolis-compatible tiles.xpm atlas mattered. The HUD mattered.
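A headless frame dump needs very little code, which is part of why it is worth having early. The sketch below writes a binary PPM (the "P6" variant) from a tightly packed RGB framebuffer. The function name and the framebuffer layout are assumptions for illustration, not the port's actual renderer interface.

```rust
use std::io::{self, Write};

/// Writes an RGB framebuffer (3 bytes per pixel, row-major) as a binary PPM.
pub fn write_ppm<W: Write>(
    out: &mut W,
    width: usize,
    height: usize,
    rgb: &[u8],
) -> io::Result<()> {
    if rgb.len() != width * height * 3 {
        return Err(io::Error::new(
            io::ErrorKind::InvalidInput,
            "framebuffer size does not match dimensions",
        ));
    }
    // Header: magic number, dimensions, maximum channel value.
    write!(out, "P6\n{} {}\n255\n", width, height)?;
    out.write_all(rgb)
}
```

Because the writer is generic over `Write`, the same function can target a file for inspection or a `Vec<u8>` in a test, which makes frame output easy to compare between runs.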

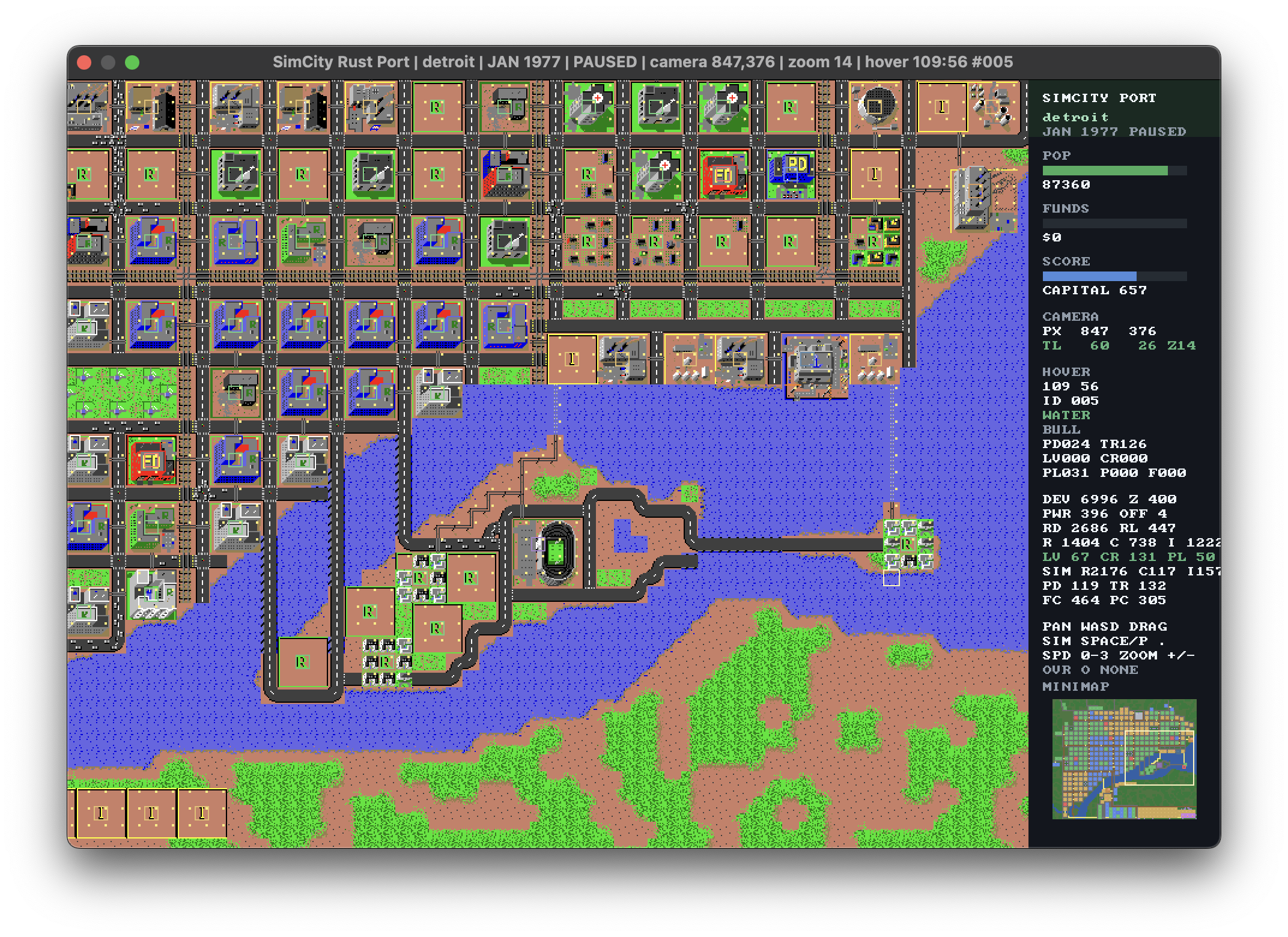

The lead image is not just proof that the port opens. It is an inspection tool. The sidebar carries city state, funds, score, camera position, hover coordinates, tile IDs, overlay mode, and a minimap. That turned the app into something closer to lab equipment than a vanity port.

The overlays were especially valuable. Population density, traffic, land value, crime, pollution, police coverage, and fire coverage were not added as polish. They were added because they made bad assumptions visible. The map storage order was wrong at first. Some tile and power rules were wrong. Traffic was too smeared until the model changed. Those bugs would have survived much longer inside text alone.
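One simple way to make such a layer visible is to map each cell's intensity to a color ramp and blend it over the base frame. The sketch below assumes one 0 to 255 intensity value per pixel and a blue-to-red ramp. Both are illustrative choices, not a description of how the port draws its overlays, and every name is hypothetical.

```rust
/// Maps an intensity to a ramp from blue (0) to red (255).
pub fn heat_color(value: u8) -> [u8; 3] {
    [value, 0, 255 - value]
}

/// Blends `overlay` over `base`; alpha 0 keeps the base, 255 replaces it.
pub fn blend(base: [u8; 3], overlay: [u8; 3], alpha: u8) -> [u8; 3] {
    let a = alpha as u16;
    // Weights sum to 255, so the intermediate value fits in a u16.
    let mix = |b: u8, o: u8| ((b as u16 * (255 - a) + o as u16 * a + 127) / 255) as u8;
    [
        mix(base[0], overlay[0]),
        mix(base[1], overlay[1]),
        mix(base[2], overlay[2]),
    ]
}

/// Tints an RGB frame in place using one intensity value per pixel.
pub fn apply_overlay(frame: &mut [u8], values: &[u8], alpha: u8) {
    for (pixel, &value) in frame.chunks_exact_mut(3).zip(values) {
        let blended = blend([pixel[0], pixel[1], pixel[2]], heat_color(value), alpha);
        pixel.copy_from_slice(&blended);
    }
}
```

A partially transparent alpha keeps the underlying tiles readable, so a suspicious hot spot can be checked against the roads and zones beneath it.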

A believable city in a macOS window told me more, faster, than another hour of reading pseudo-C in isolation. That is the real inversion. The port was not downstream from the reverse-engineering effort. It became part of the reverse-engineering apparatus.

Recovered logic is not reconstructed logic

This distinction is the ethical center of the whole workflow.

What I actually recovered from the DOS executable is substantial: the packed startup path, the unpacked runtime entry, command-line and config handling, graphics backend dispatch, viewport movement, and the structure of .CTY saves plus several important metadata fields. That is real recovered structure.

What remains reconstructed rather than faithfully lifted is also substantial: land value, crime, pollution, traffic, service coverage, zone desirability, valve behavior, and score. The current Rust app is already a useful viewer and simulation sandbox, but it is not yet a completed decompilation of original SimCity logic. The prototype is convincing because it is alive, not because the binary has been solved.

This is exactly where AI-assisted reverse engineering can mislead people. The workflow feels so fluid that progress starts looking more authoritative than it is. A strong prototype can create the illusion that the executable has been fully understood. It has not. It has only been made legible enough to keep going honestly.

Agent-assisted reverse engineering is powerful, but only if recovered facts and reconstructed behavior stay visibly separate.
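One way to keep that separation visible is to encode it in the program rather than in notes. The sketch below tags each subsystem named above with its provenance and exposes a label that a HUD or log line could print. The type, table, and function names are hypothetical; only the two lists of subsystems come from this section.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Provenance {
    /// Structure lifted from the original executable.
    Recovered,
    /// Behavior rebuilt to look plausible, not yet verified against the binary.
    Reconstructed,
}

pub const SUBSYSTEMS: &[(&str, Provenance)] = &[
    ("packed startup path", Provenance::Recovered),
    ("unpacked runtime entry", Provenance::Recovered),
    ("command-line and config handling", Provenance::Recovered),
    ("graphics backend dispatch", Provenance::Recovered),
    ("viewport movement", Provenance::Recovered),
    (".CTY save structure", Provenance::Recovered),
    ("land value", Provenance::Reconstructed),
    ("crime", Provenance::Reconstructed),
    ("pollution", Provenance::Reconstructed),
    ("traffic", Provenance::Reconstructed),
    ("service coverage", Provenance::Reconstructed),
    ("zone desirability", Provenance::Reconstructed),
    ("valve behavior", Provenance::Reconstructed),
    ("score", Provenance::Reconstructed),
];

/// Looks up how a subsystem was obtained, if it has been classified.
pub fn provenance_of(name: &str) -> Option<Provenance> {
    SUBSYSTEMS.iter().find(|(n, _)| *n == name).map(|&(_, p)| p)
}

/// Produces a label suitable for a HUD or log line.
pub fn hud_label(name: &str) -> String {
    match provenance_of(name) {
        Some(Provenance::Recovered) => format!("{} [recovered]", name),
        Some(Provenance::Reconstructed) => format!("{} [reconstructed]", name),
        None => format!("{} [unclassified]", name),
    }
}
```

Surfacing the tag next to each overlay or statistic means a convincing traffic map still announces that it is a reconstruction.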

What else is worth modernizing

This is not a side question. It is the reason the SimCity case study matters at all.

If a packed DOS city builder can yield enough structure in an afternoon to get a serious prototype moving, then the interesting question is what other binaries are trapped behind obsolete operating systems, missing source trees, unsupported hardware, or vendor abandonment. Not every old executable deserves rescue. But many still contain workflows, file formats, and operational knowledge that would be expensive to rediscover from scratch.

The best targets are usually not the most famous ones. They are the ones where the original platform is dead but the behavior still matters.

1. Niche professional tools

Think old CAD packages, municipal planning tools, lab software, medical-office utilities, scientific instruments, mapping systems, and line-of-business apps that still encode real workflow knowledge. If the binary still reflects how people actually work, modernization can be more valuable than replacement. The executable is not just code. It is institutional memory.

2. Embedded firmware and device software

Firmware is an obvious next frontier, with an immediate caveat: only on hardware you own or are authorized to inspect, and only with a safe bench setup. The interesting cases are devices whose electronics still work but whose interface, updater, host utility, or protocol is stranded on an old platform. In those cases, reverse engineering is not only about curiosity. It can restore maintainability, interoperability, and basic control over expensive hardware.

3. Internal software with lost provenance

Many organizations still depend on binaries whose source is incomplete, missing, or effectively unbuildable. A workflow like this can recover enough structure to document file formats, isolate critical logic, and gradually replace the shell around it. That is often more realistic than a ground-up rewrite based on interviews and folklore.

4. Historically important software that deserves a living form

Games still matter here, but not only for preservation. A native rewrite can make an old system inspectable, scriptable, portable, and accessible in ways emulation does not. That matters for teaching, for design research, and for cultural memory. A game like SimCity is interesting partly because it also behaves like infrastructure: it encodes assumptions about maps, systems, simulation, and interface design that are easier to study once they are alive again.

The best reverse-engineering targets are not merely old. They are high-value pieces of stranded capability.

A simple filter helps. A binary is worth modernizing when most of the following are true: the workflow still matters, source code is missing or unusable, emulation is a dead end for real use, the file formats or device behavior contain durable knowledge, and there is a plausible path to testing the recovered behavior in a modern shell.

The weak targets are just as important to name. Commodity software with obvious replacements is rarely worth the effort. Safety-critical systems without authorization or verification paths are bad candidates. Anything where legal access is unclear, or where "modernization" really means bypassing security controls, should be treated as a stop sign rather than a challenge.
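The filter and the stop signs above can be written down as a small checklist type. This is only a sketch: reading "most" as at least three of the five criteria is an assumption, the field names are hypothetical, and a single `stop_sign` flag stands in for any of the disqualifiers (unclear legal access, no authorization or verification path, or security bypass).

```rust
#[derive(Debug, Default, Clone, Copy)]
pub struct Candidate {
    pub workflow_still_matters: bool,
    pub source_missing_or_unusable: bool,
    pub emulation_is_dead_end: bool,
    pub durable_knowledge: bool,
    pub testable_in_modern_shell: bool,
    /// Any disqualifier from the weak-target list; overrides everything else.
    pub stop_sign: bool,
}

impl Candidate {
    /// Counts how many of the five positive criteria hold.
    pub fn criteria_met(&self) -> usize {
        [
            self.workflow_still_matters,
            self.source_missing_or_unusable,
            self.emulation_is_dead_end,
            self.durable_knowledge,
            self.testable_in_modern_shell,
        ]
        .iter()
        .filter(|&&met| met)
        .count()
    }

    /// Assumed threshold: a majority of the criteria, and no stop sign.
    pub fn worth_modernizing(&self) -> bool {
        !self.stop_sign && self.criteria_met() >= 3
    }
}
```

The point of writing it down is less the arithmetic than the ordering: a stop sign is checked first and cannot be outvoted by an otherwise attractive target.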

What this workflow is actually good for

The useful claim is not that an AI agent can "reverse engineer SimCity." The useful claim is that stranded binaries can often be made legible, testable, and replaceable much earlier than teams assume. SimCity is the case study, not the boundary.

What the agent was actually good at was structure, iteration, and glue. It could query Ghidra for narrow facts, follow references, summarize likely boundaries, help write parsers and renderers, and then immediately fold runtime evidence back into the next question. The rhythm mattered more than any one tool: ask a small reverse-engineering question, implement the smallest validating Rust change, add an inspectable output, repeat.

That is why I would start the same way again. I would not begin by demanding a full decompilation of a late-1980s DOS game, an orphaned Windows utility, or a stranded device updater. I would begin by asking what minimum recovered truth would let a modern program expose the rest. The first win is not fidelity. It is building an instrument that makes fidelity attainable.